Simple linear regression is a predictive algorithm that models a linear relationship between one input (x) and a predicted result (y).

We'll take a look at how you can do it by hand and then implement a function in JavaScript that does exactly this for us.

The simple linear regression

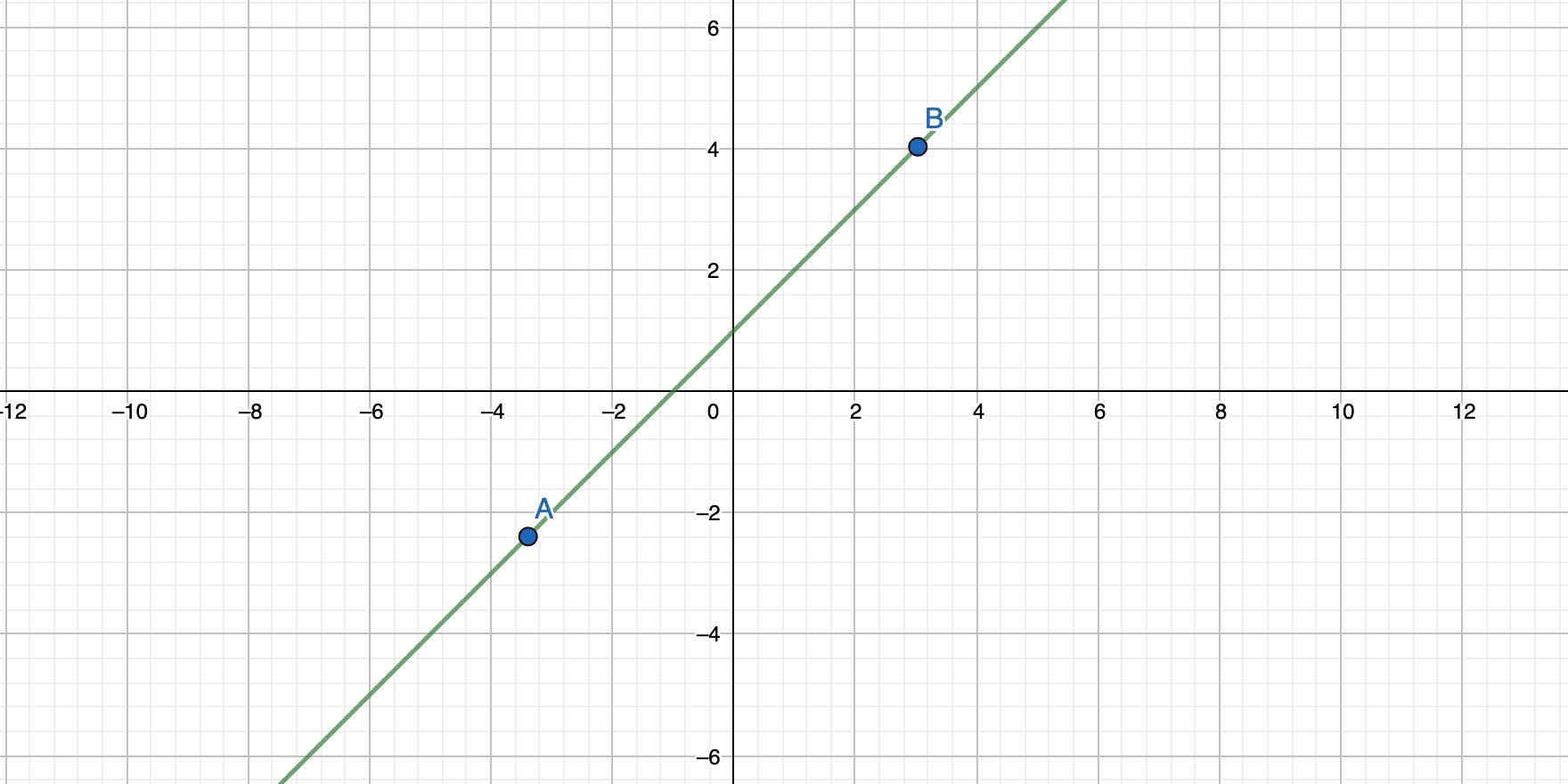

Imagine a two-dimensional coordinate system with two points. You can connect both points with a straight line and calculate the formula for that line, which has the form y = mx + b.

b is the intercept. It's the point where the straight line crosses the y-axis.

m is the slope of the line.

x is the input.
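
To make the two-point case concrete, here is a minimal sketch. The helper name `lineThroughTwoPoints` and the example points are made up for illustration; the function derives m and b for the line through two points.

```javascript
// Hypothetical helper: derives the slope (m) and intercept (b) of the
// line through two points, each given as an [x, y] pair.
function lineThroughTwoPoints([x1, y1], [x2, y2]) {
  if (x1 === x2) {
    // A vertical line has no finite slope.
    throw new Error("A vertical line cannot be written as y = mx + b");
  }
  const m = (y2 - y1) / (x2 - x1); // slope: change in y per unit of x
  const b = y1 - m * x1; // intercept: value of y at x = 0
  return { m, b };
}

// Example points chosen for illustration only.
const { m, b } = lineThroughTwoPoints([1, 3], [3, 7]);
console.log(m, b); // 2 1
```

With these two points the line is y = 2x + 1.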

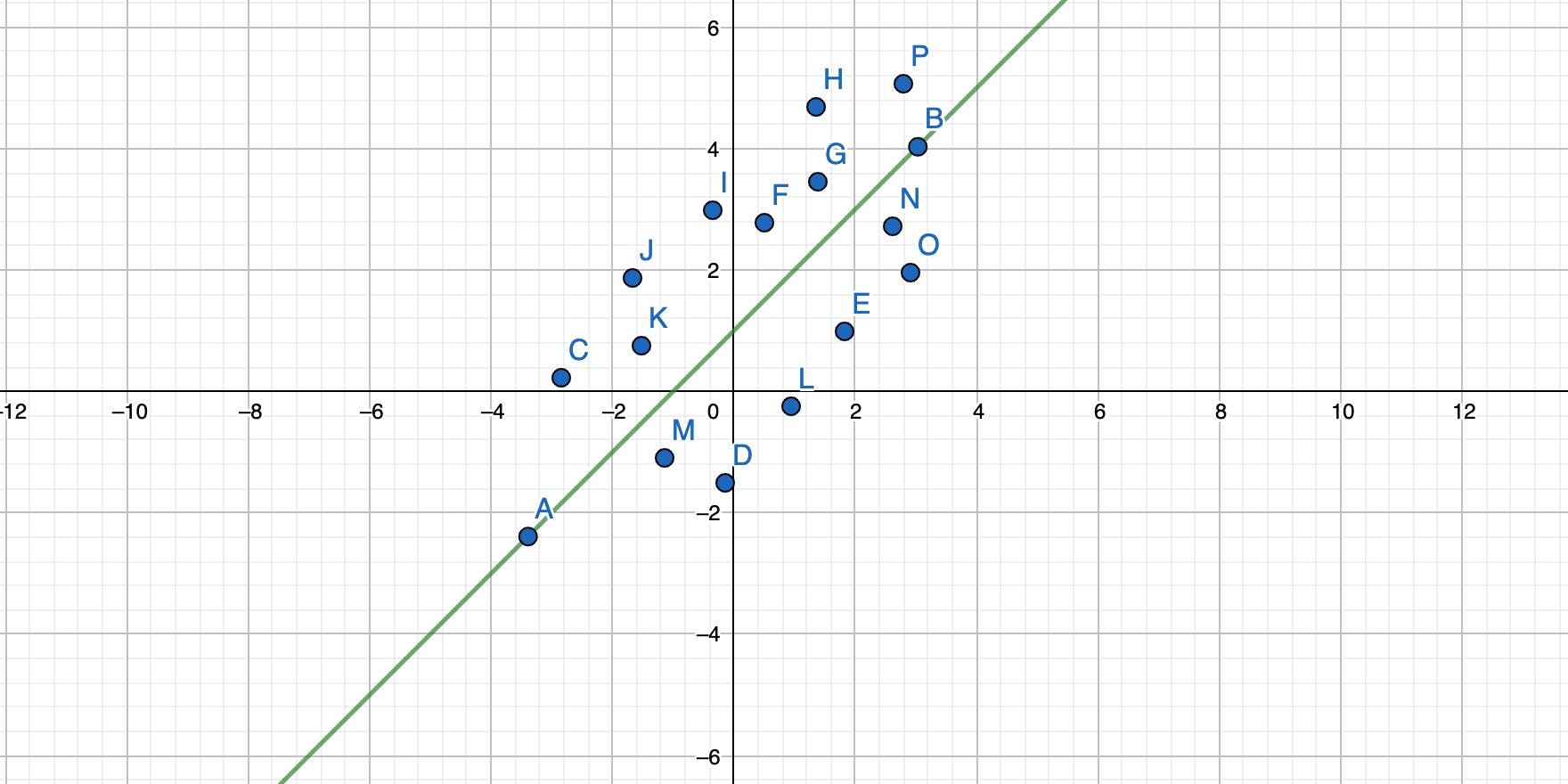

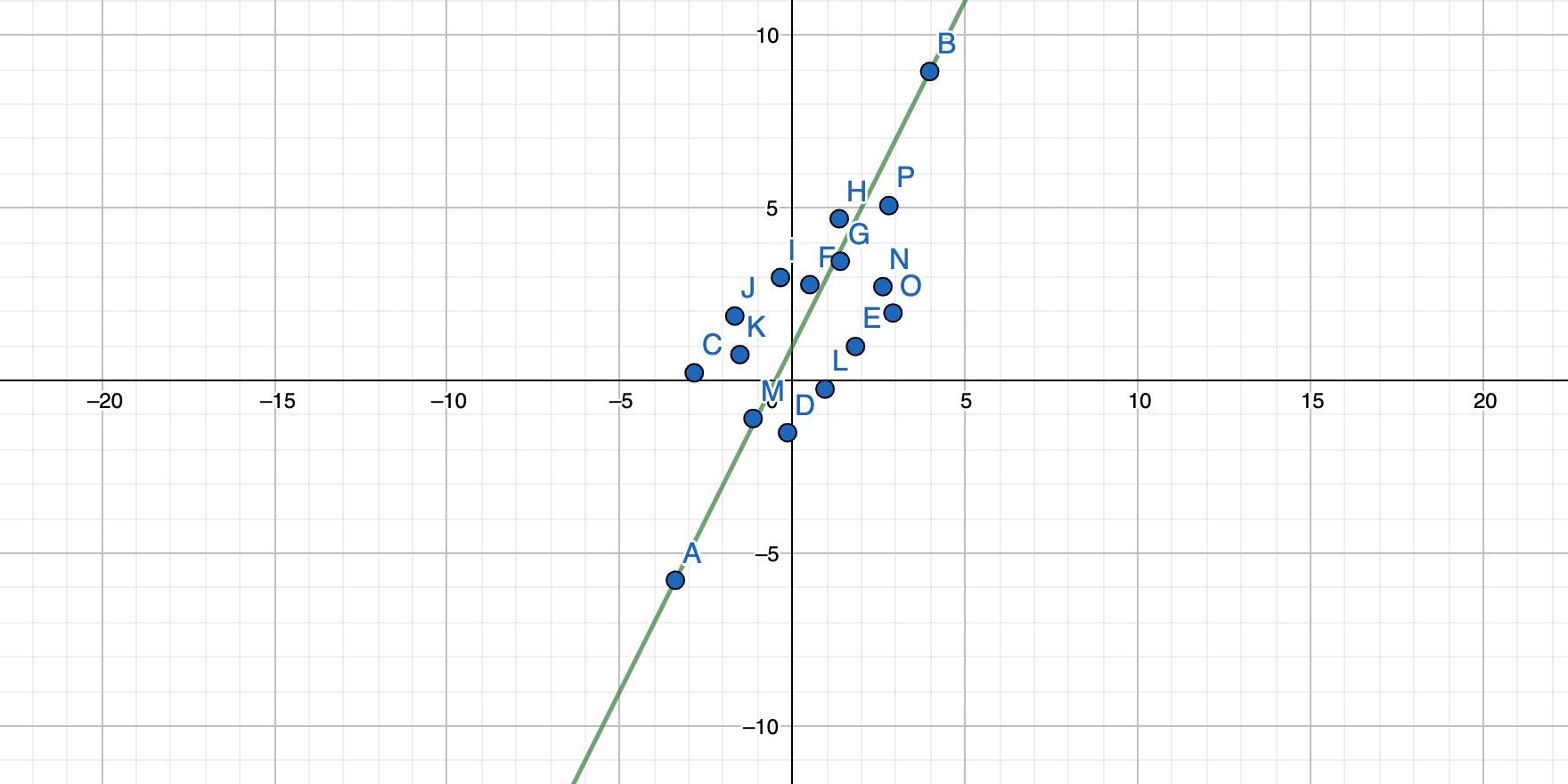

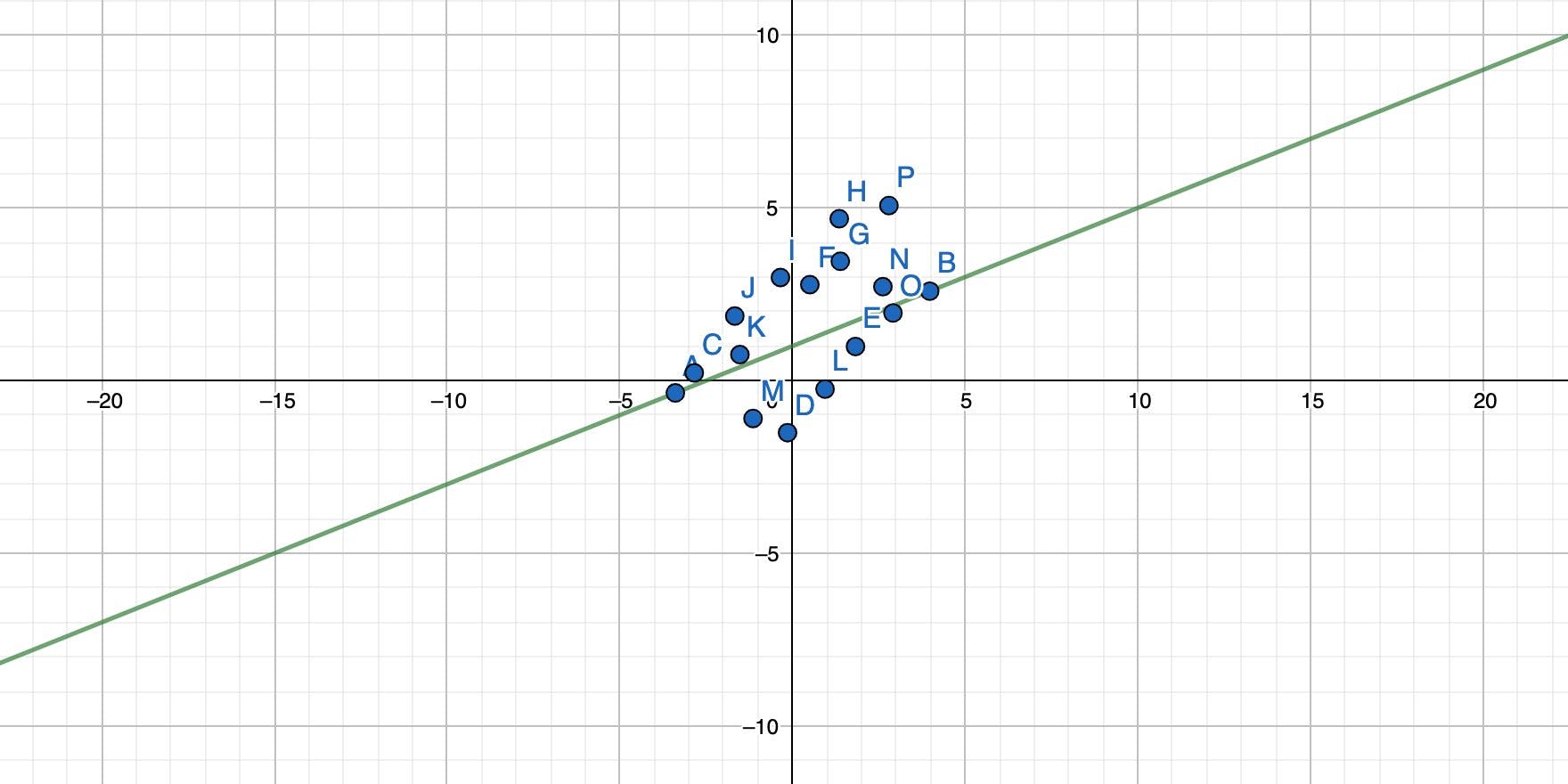

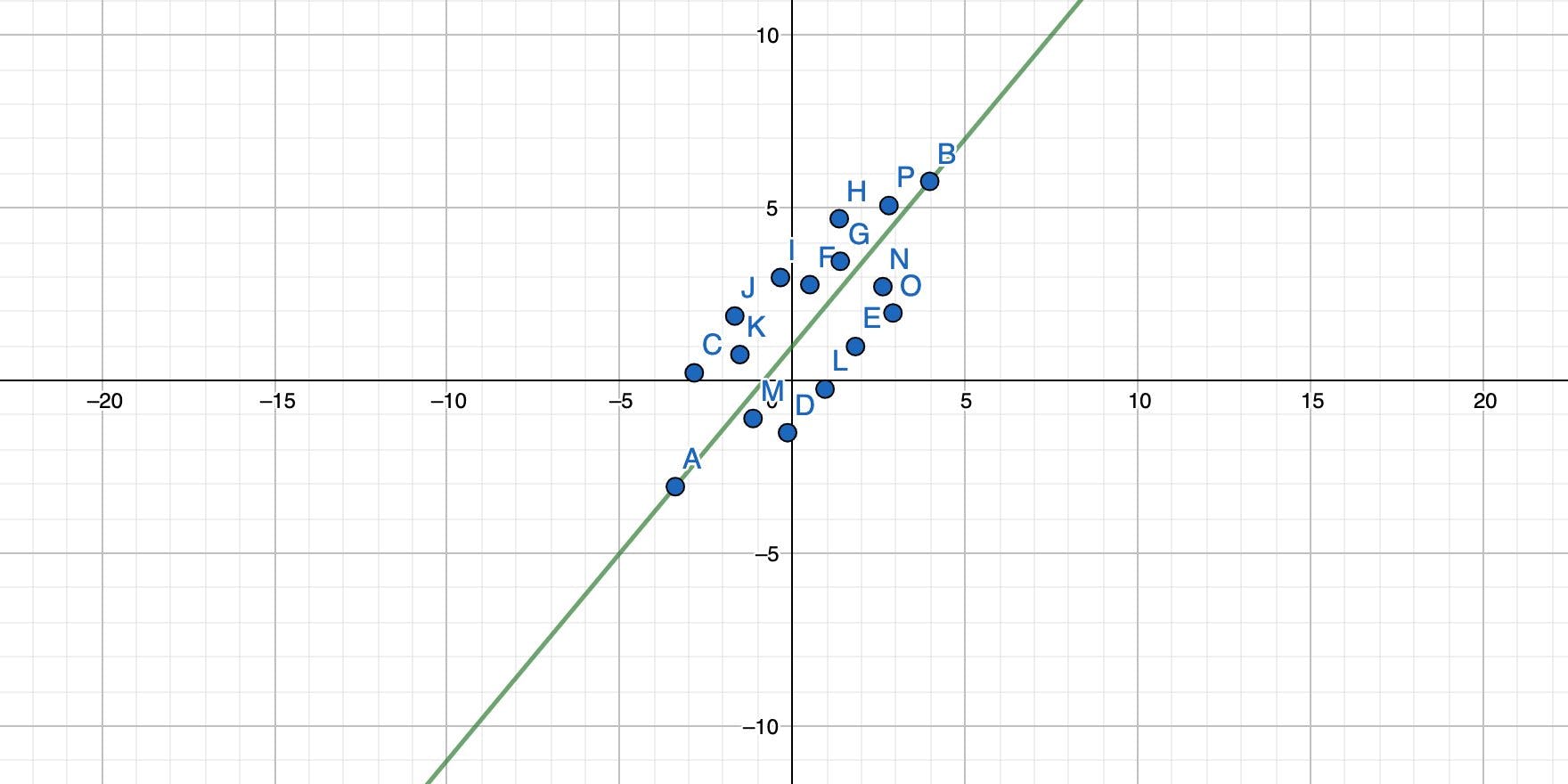

With only two points, calculating y = mx + b is straightforward and doesn't take much time. But now imagine that you have a few more points. Which points should the line actually connect? What would its slope and its intercept be?

Simple linear regression solves this problem by finding the line through the cloud of points that keeps the overall distance between the points and the line as small as possible. More precisely, it minimizes the sum of the squared vertical distances from each point to the line.

Or in other words: Find the best possible solution while, most likely, never hitting the exact result. But that result is near enough so that we can work with it. You basically shift and rotate the straight line until all points together have the minimum possible distance to the line.
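
As a rough sketch of what "minimum possible distance" means, the snippet below sums the squared vertical distances between the points and a candidate line. The function name `sumOfSquaredErrors` and the rough guess of y = 1000x are assumptions for illustration. The data points are the five house sales used in the JavaScript section further down, and the second line uses the rounded slope and intercept derived later in this article.

```javascript
// Sum of the squared vertical distances between each point and the
// candidate line y = m * x + b. Smaller means a better fit.
function sumOfSquaredErrors(points, m, b) {
  return points.reduce((sum, [x, y]) => sum + (y - (m * x + b)) ** 2, 0);
}

// [area in square meters, price in dollars]
const points = [
  [200, 190000],
  [100, 90000],
  [115, 120000],
  [150, 140000],
  [140, 125000],
];

// A rough guess (1000 dollars per square meter, no intercept) ...
const roughGuess = sumOfSquaredErrors(points, 1000, 0);
// ... compared with the rounded regression line from this article.
const fitted = sumOfSquaredErrors(points, 934.97, 1169.76);

console.log(fitted < roughGuess); // true
```

The regression line is the one for which this sum cannot be made any smaller.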

The result is a function that also has the form y = mx + b, and each x passed into this function yields a result of y, which is the prediction for this particular input.

As you might be able to guess already, the simple linear regression is not a fit for all problems. It is useful when there is a linear relationship between your input x and the outcome y but way less useful when that relationship is not linear. In that case, you're better off using another algorithm.

Some math

You can't get around math if you want to understand how simple linear regression works, but I'll spare you most mathematical expressions and only supply what's really necessary.

To make things easier, I'll use Excel to show you the math and provide you with an example scenario. You can follow along (Google Sheets works, too) if you like.

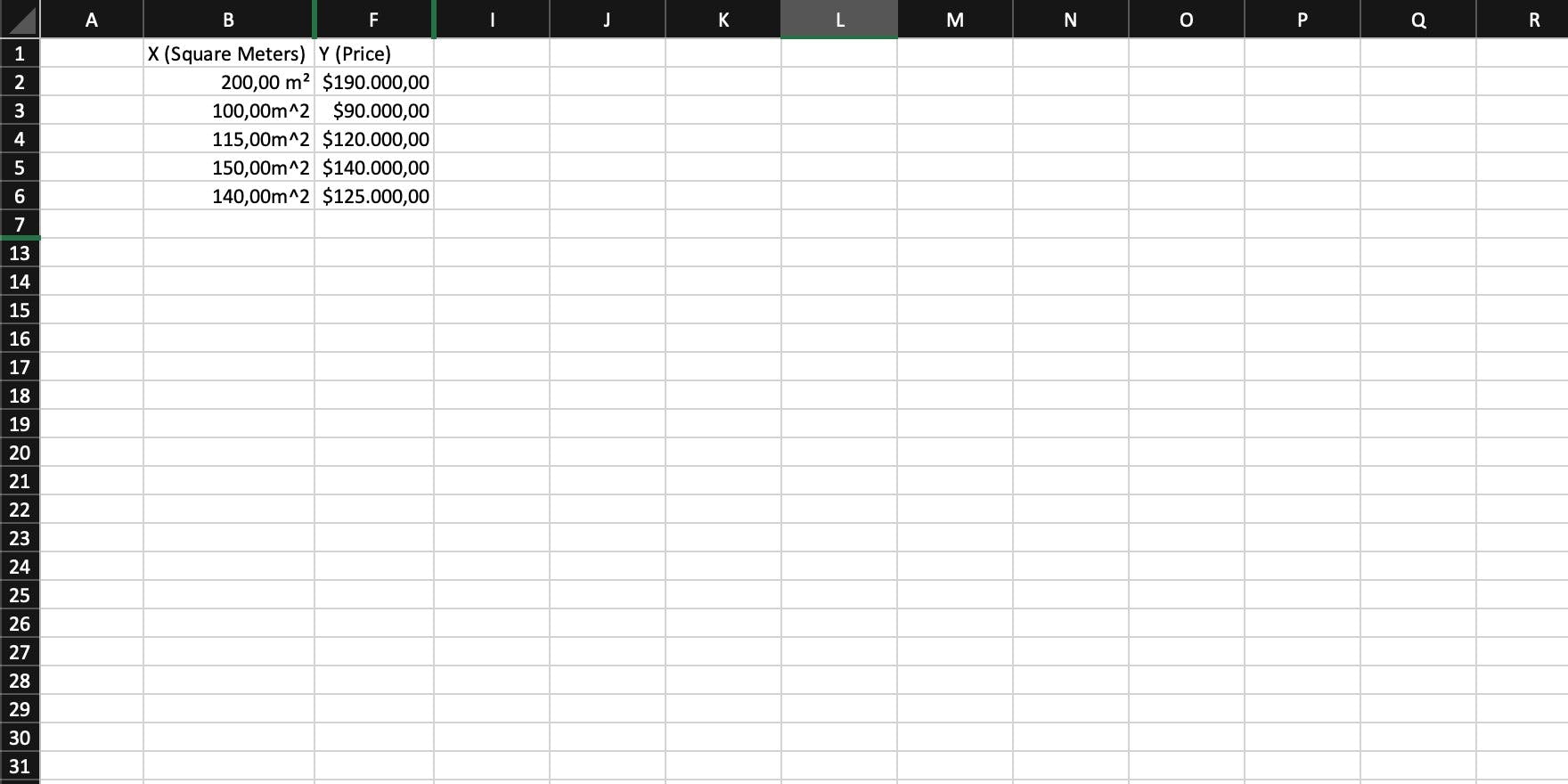

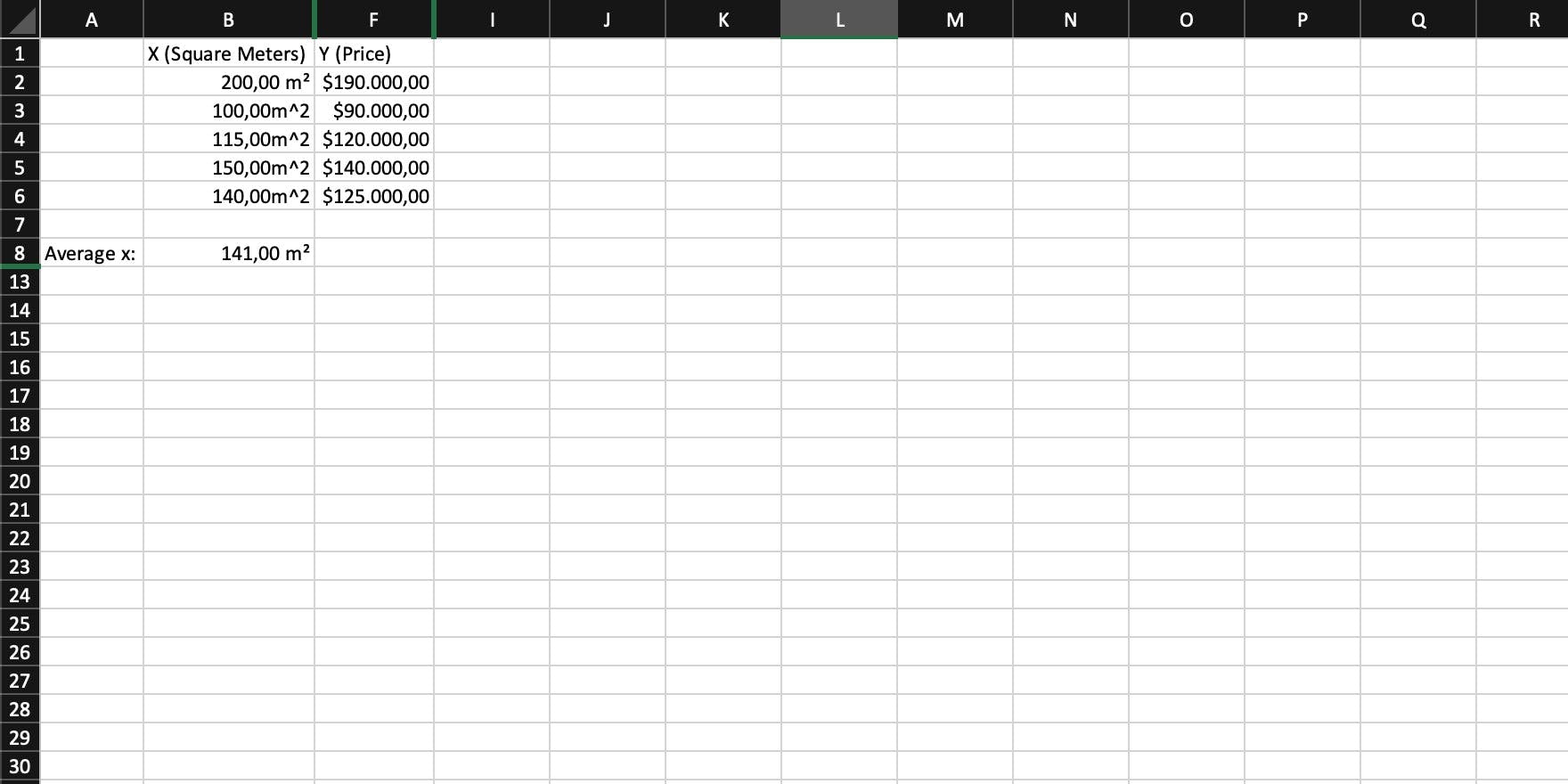

In this case, we assume that the area (in square meters) of a house directly affects its price. This scenario ignores that there may be more input variables affecting the price, like location, neighborhood, and so on. It's only very basic, but should be enough for you to understand the simple linear regression and the math associated with it.

Start

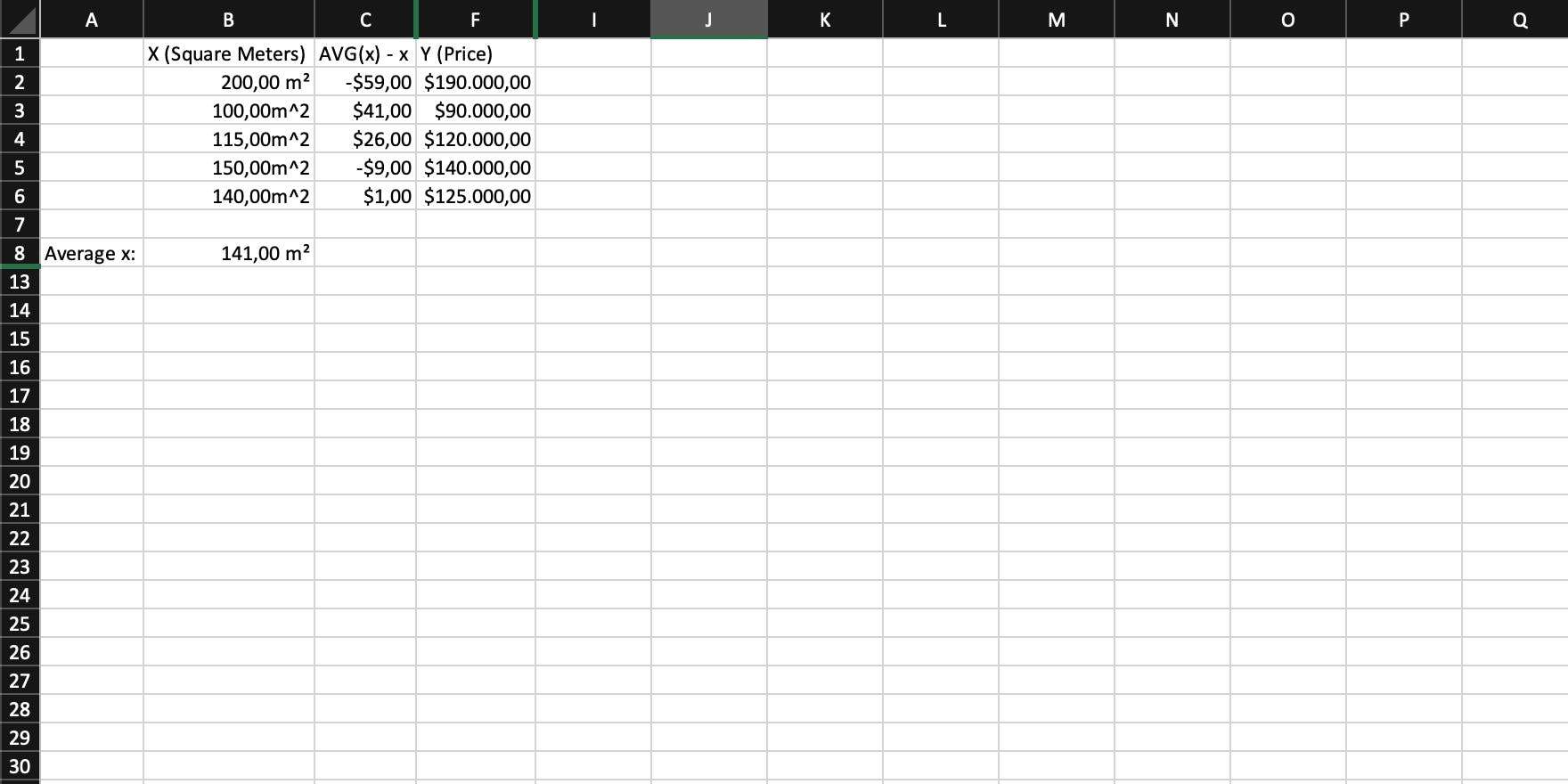

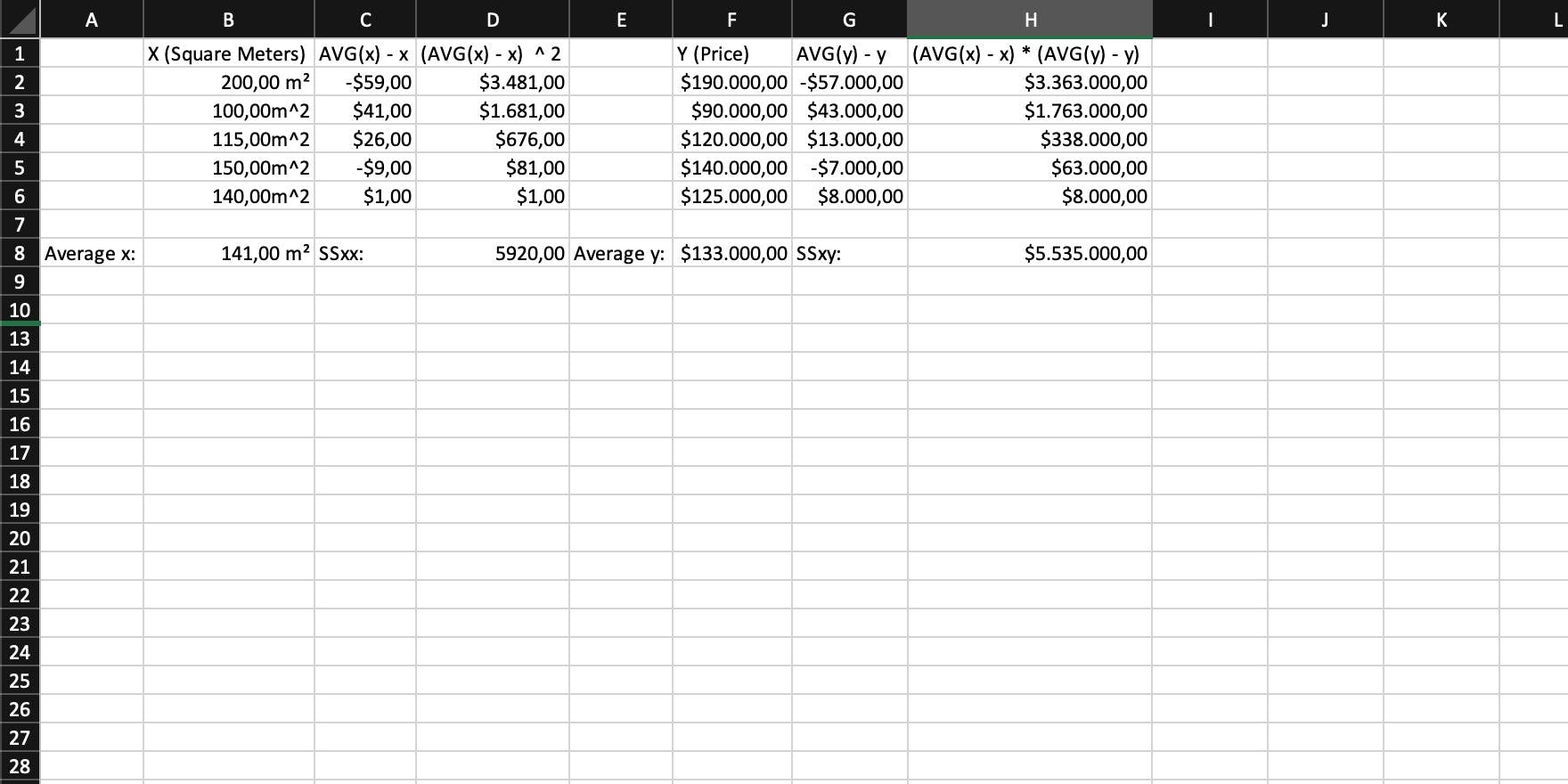

The starting point is a collection of house sales, listed as the area in square meters, and the price the house sold for.

Step 1

Calculate the average of x. Sum up all values and then divide that sum by the number of values you summed up (or simply use the spreadsheet's AVERAGE function).

Step 2

You now need the difference of each individual x to the average of x. In other words: For each x, calculate AVG(x) - x.

Step 3

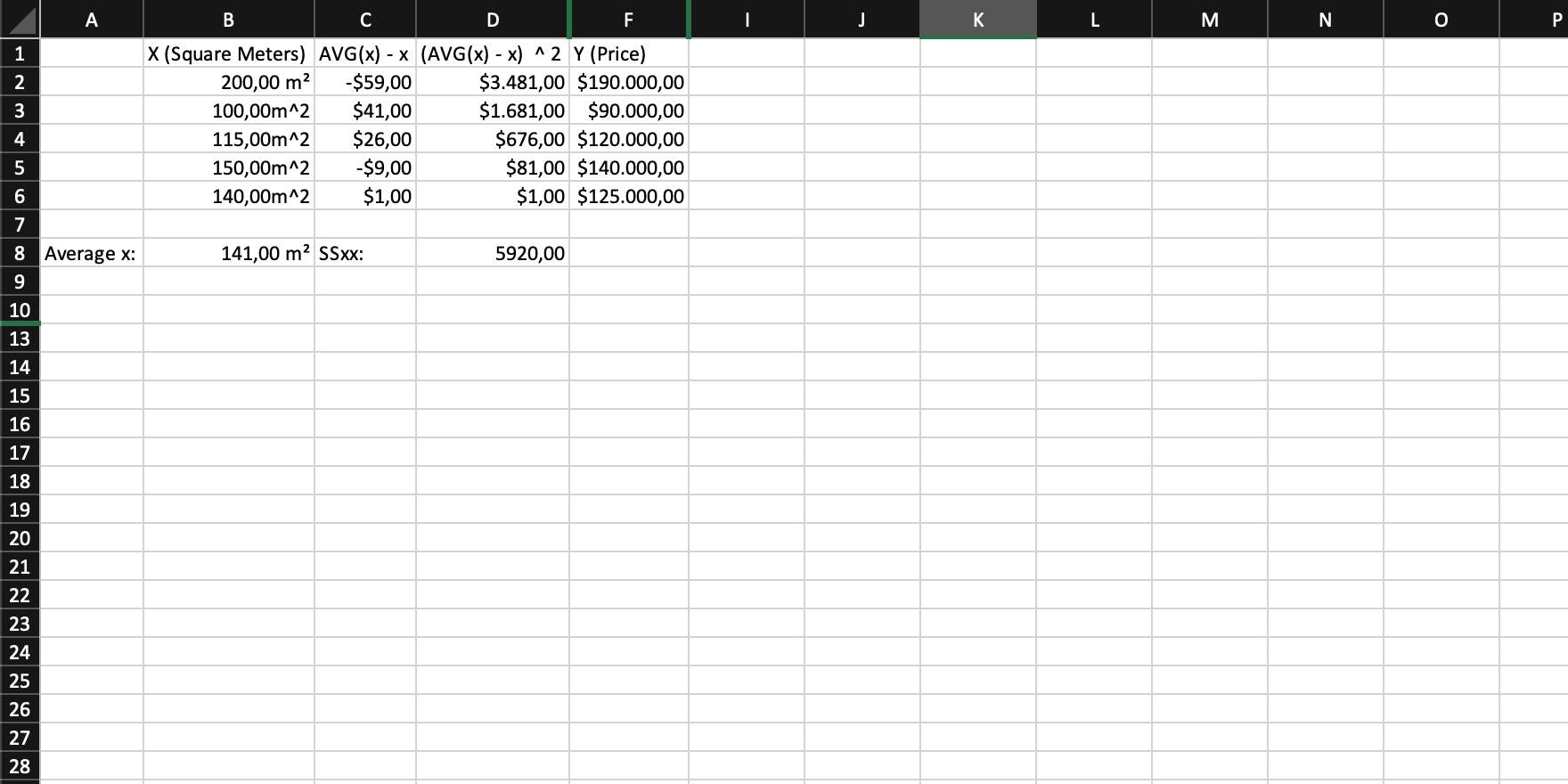

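Calculate SSxx, the sum of the squared differences of x, by:

- Squaring the difference of each x to the average of x

- Summing them all up

SSxx is closely related to the variance: divide it by the number of values (or by that number minus one for a sample) and you get the variance of x, which basically states how far observed values differ from the average.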
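
Written as formulas, steps 1 to 3 could look like this. The notation is assumed here rather than taken from the spreadsheet: n is the number of house sales, x_i is the area of the i-th sale, and x̄ is the average of x.

$$
\bar{x} = \frac{1}{n} \sum_{i=1}^{n} x_i
\qquad
SS_{xx} = \sum_{i=1}^{n} (\bar{x} - x_i)^2
$$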

Step 4

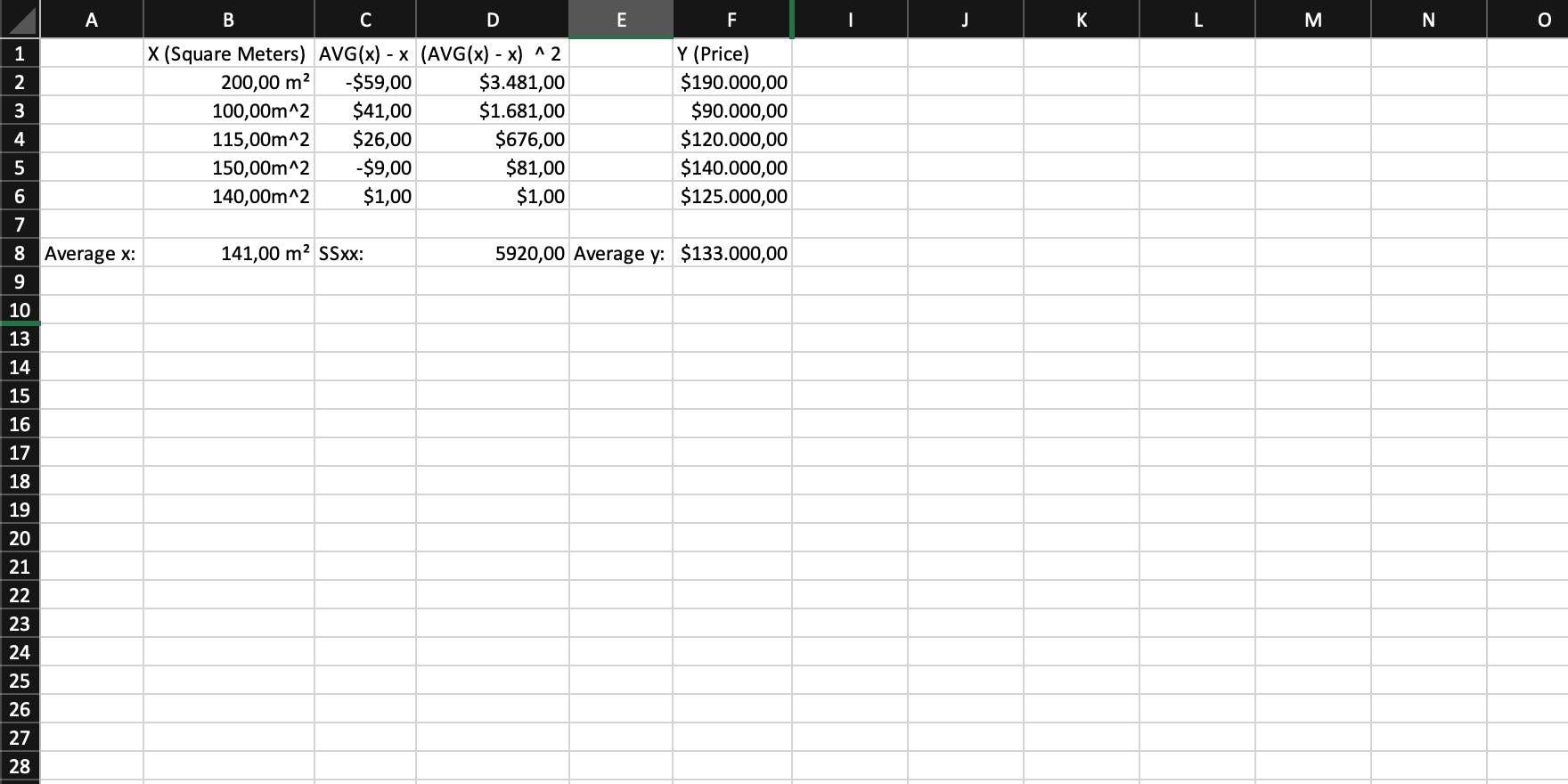

You now need the average of your y. Like you already did for x, sum them all up and divide that sum by the number of values (or use the spreadsheet's AVERAGE function).

Step 5

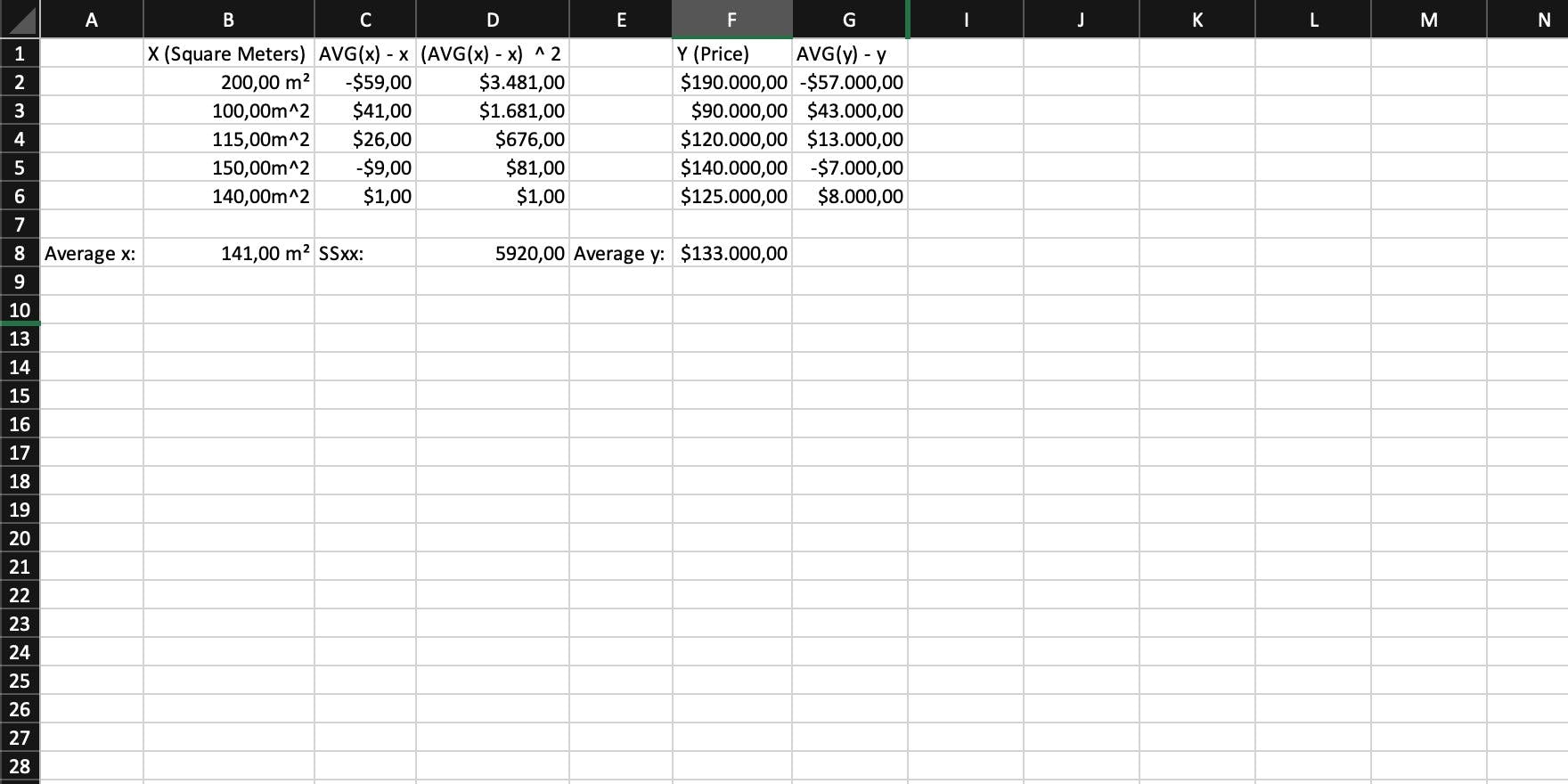

Calculate the difference of each y to the average of y. In other words: For each y, calculate AVG(y) - y (Yes, this is step 2 but for y).

Step 6

Now, for each row, multiply the difference of x to its average by the difference of y to its average, and sum up those products. This is SSxy. Divided by the number of values, it would be the covariance of x and y.
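
As a formula, this step could be written as follows. The notation is again an assumption: n is the number of house sales, x_i and y_i are the area and price of the i-th sale, and x̄ and ȳ are the averages from steps 1 and 4.

$$
SS_{xy} = \sum_{i=1}^{n} (\bar{x} - x_i)(\bar{y} - y_i)
$$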

Step 7

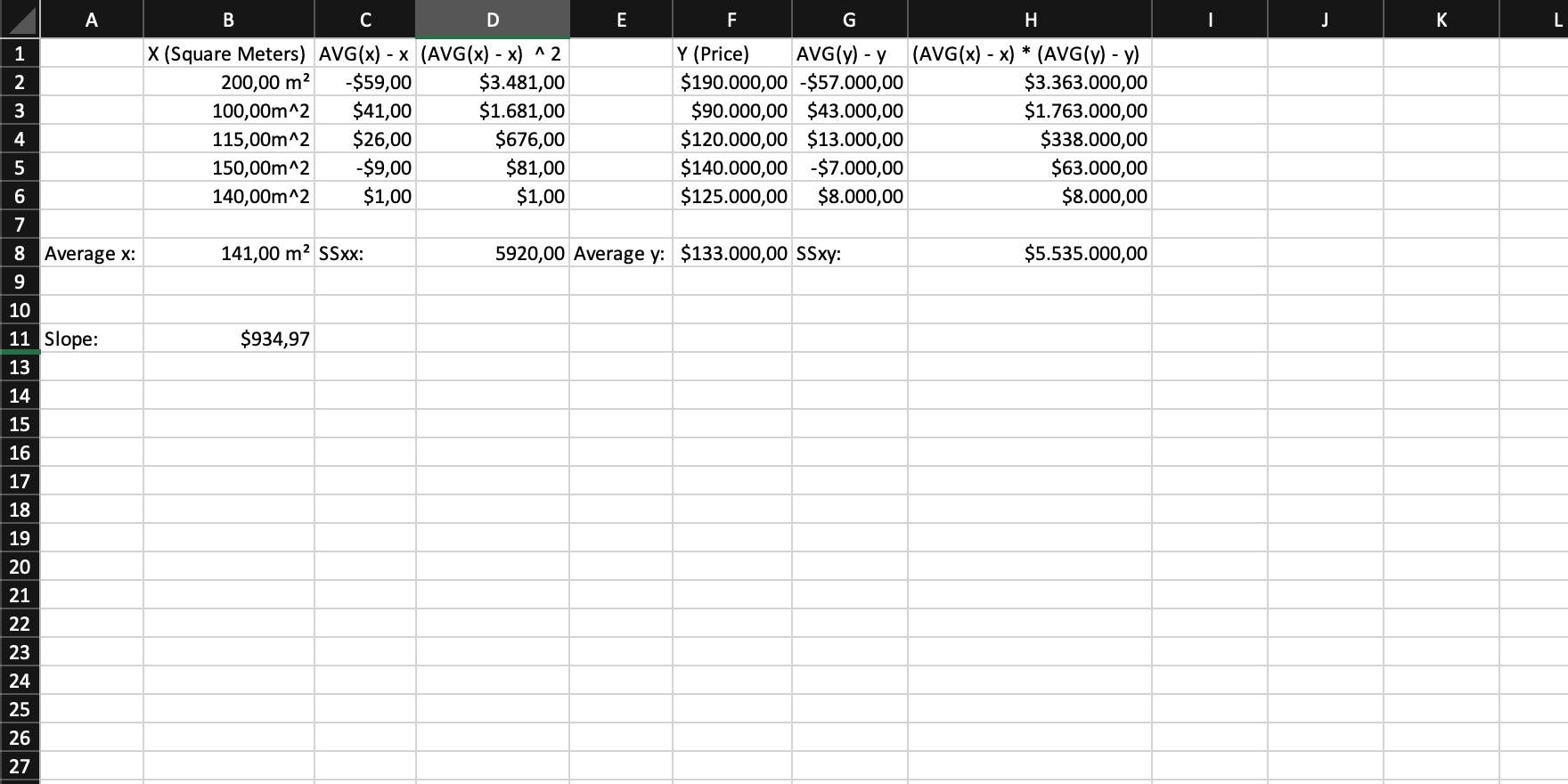

You can now calculate the slope, using SSxx and SSxy with the following formula: slope = SSxy / SSxx = m.

Step 8

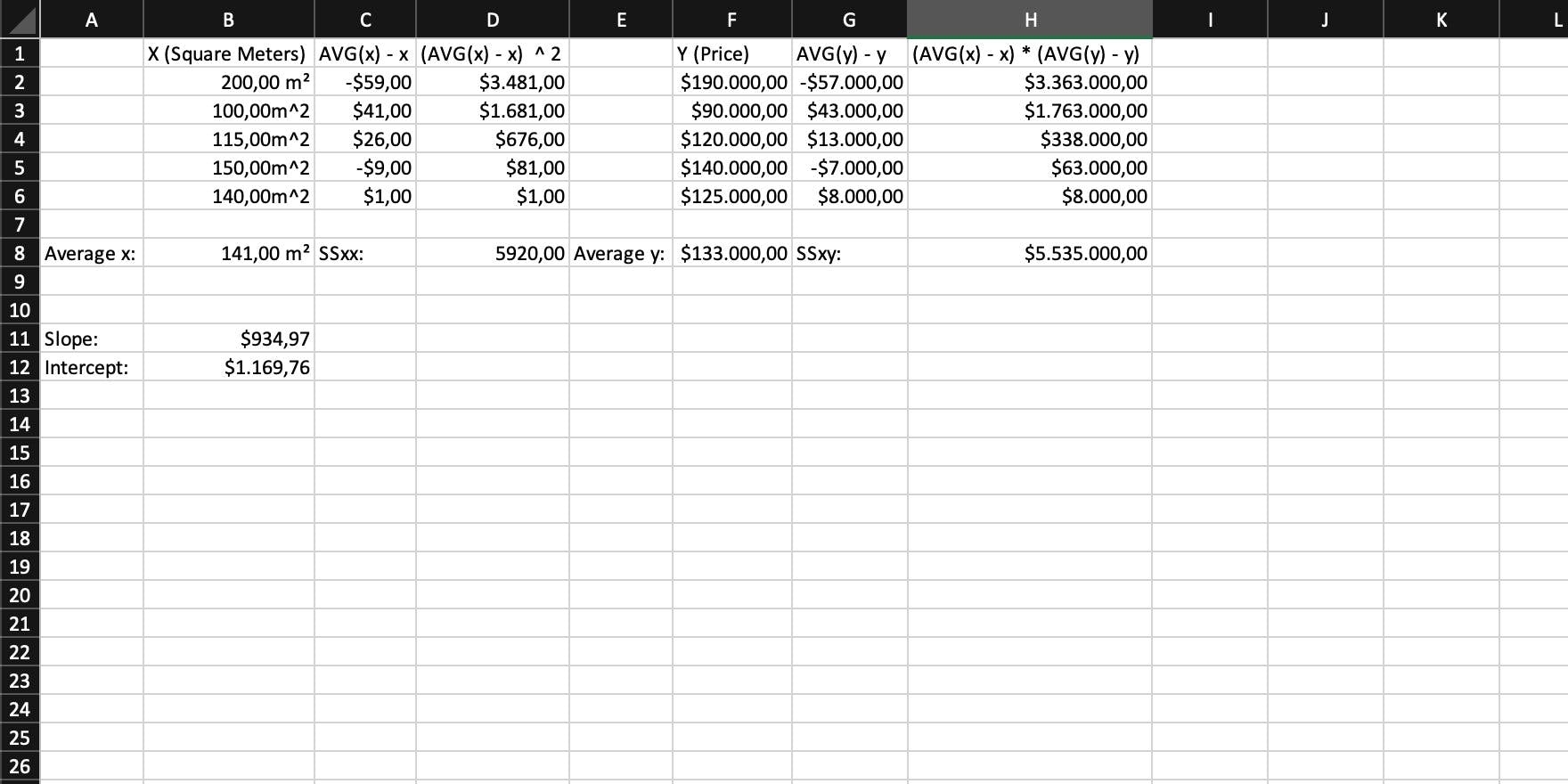

The last thing to do is to calculate the intercept with the formula: intercept = AVG(y) - slope * AVG(x) = b.

Step 9

You're finished. Simply put everything together and you have your linear function: y = intercept + slope * x = 1169.76 + 934.97 * x.
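
As a sanity check, here is the arithmetic behind those numbers. It assumes that the spreadsheet contains the same five house sales that the JavaScript section below uses. The spreadsheet data is only visible in the screenshots, so treat that as an assumption. The symbols x̄, ȳ, m, and b stand for the two averages, the slope, and the intercept.

$$
\begin{aligned}
\bar{x} &= \frac{200 + 100 + 115 + 150 + 140}{5} = 141 \\
\bar{y} &= \frac{190000 + 90000 + 120000 + 140000 + 125000}{5} = 133000 \\
SS_{xx} &= (-59)^2 + 41^2 + 26^2 + (-9)^2 + 1^2 = 5920 \\
SS_{xy} &= (-59)(-57000) + (41)(43000) + (26)(13000) + (-9)(-7000) + (1)(8000) = 5535000 \\
m &= \frac{SS_{xy}}{SS_{xx}} = \frac{5535000}{5920} \approx 934.97 \\
b &= \bar{y} - m \bar{x} = 133000 - \frac{5535000}{5920} \cdot 141 \approx 1169.76
\end{aligned}
$$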

Implementing the simple linear regression in JavaScript

Until now, everything you did was based on Excel. But it's way more fun to implement something in a real programming language. And this language is JavaScript.

The goal is to create a function that does the linear regression and then returns a function with the specific formula for the given input coded into it.

Going Into Code

Let's assume that your input is an array of objects.

Each object has the following two properties, named descriptively for easier access later:

- squareMeters

- priceInDollars

(You could also use a 2-dimensional array.)

```javascript
const inputArray = [
  {
    squareMeters: 200,
    priceInDollars: 190000
  },
  {
    squareMeters: 100,
    priceInDollars: 90000
  },
  {
    squareMeters: 115,
    priceInDollars: 120000
  },
  {
    squareMeters: 150,
    priceInDollars: 140000
  },
  {
    squareMeters: 140,
    priceInDollars: 125000
  }
];
```
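
If your data arrives as a 2-dimensional array instead, a small conversion gets you back to the object format. This is only a sketch: the names `inputPairs` and `inputFromPairs` are made up for illustration, and it assumes each inner array holds the area first and the price second.

```javascript
// Hypothetical alternative input: one [squareMeters, priceInDollars]
// pair per house sale.
const inputPairs = [
  [200, 190000],
  [100, 90000],
  [115, 120000],
  [150, 140000],
  [140, 125000],
];

// Destructure each pair and rebuild it as an object with named properties.
const inputFromPairs = inputPairs.map(([squareMeters, priceInDollars]) => ({
  squareMeters,
  priceInDollars,
}));

console.log(inputFromPairs[0]); // { squareMeters: 200, priceInDollars: 190000 }
```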

The first step is to create a function and split your input array into two arrays, each containing either your x or your y values.

These are the split base arrays that all further operations will be based on, and with the format above chosen, it makes sense to create a function that works for more scenarios than only the one you're handling here.

By using dynamic property access, this function is able to do a linear regression for any array that contains objects with two or more properties.

```javascript
function linearRegression(inputArray, xLabel, yLabel) {
  const x = inputArray.map((element) => element[xLabel]);
  const y = inputArray.map((element) => element[yLabel]);
}
```
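
In case dynamic property access is new to you, here is a minimal sketch of what `element[xLabel]` does. The object `sale` and its extra `rooms` property are made up for illustration.

```javascript
// Hypothetical object with more than two properties.
const sale = { squareMeters: 200, priceInDollars: 190000, rooms: 5 };

// The property names are held in variables ...
const xLabel = "squareMeters";
const yLabel = "priceInDollars";

// ... and bracket notation looks up whatever name the variable contains.
console.log(sale[xLabel]); // 200
console.log(sale[yLabel]); // 190000

// Any other property, like rooms, is simply ignored.
console.log(sale[xLabel] === sale.squareMeters); // true
```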

Based on the first array, your x, you can now sum all values up and calculate the average. A reduce() on the array and dividing that result by the array's length is enough.

```javascript
const sumX = x.reduce((prev, curr) => prev + curr, 0);
const avgX = sumX / x.length;
```

Remember what you did next when you worked in Excel? Yep, you need the difference of each individual x to the average, and that difference squared.

```javascript
const xDifferencesToAverage = x.map((value) => avgX - value);
const xDifferencesToAverageSquared = xDifferencesToAverage.map(
  (value) => value ** 2
);
```

And those squared differences now need to be summed up.

```javascript
const SSxx = xDifferencesToAverageSquared.reduce(
  (prev, curr) => prev + curr,
  0
);
```

Time for a small break. Take a deep breath, recap what you have done until now, and take a look at what your function should now look like:

```javascript
function linearRegression(inputArray, xLabel, yLabel) {
  const x = inputArray.map((element) => element[xLabel]);
  const y = inputArray.map((element) => element[yLabel]);

  const sumX = x.reduce((prev, curr) => prev + curr, 0);
  const avgX = sumX / x.length;

  const xDifferencesToAverage = x.map((value) => avgX - value);
  const xDifferencesToAverageSquared = xDifferencesToAverage.map(
    (value) => value ** 2
  );

  const SSxx = xDifferencesToAverageSquared.reduce(
    (prev, curr) => prev + curr,
    0
  );
}
```

Half of the work is done but handling y is still missing, so next, you need the average of y.

```javascript
const sumY = y.reduce((prev, curr) => prev + curr, 0);
const avgY = sumY / y.length;
```

Then, similar to x, you need the difference of each y to the overall average of y.

```javascript
const yDifferencesToAverage = y.map((value) => avgY - value);
```

The next step is to multiply each x difference by the y difference at the same index.

```javascript
const xAndYDifferencesMultiplied = xDifferencesToAverage.map(
  (curr, index) => curr * yDifferencesToAverage[index]
);
```

And then, you can calculate SSxy, which is, like SSxx, a sum.

```javascript
const SSxy = xAndYDifferencesMultiplied.reduce(
  (prev, curr) => prev + curr,
  0
);
```

With everything in place, you can now calculate the slope and the intercept of the straight line going through the cloud of points.

```javascript
const slope = SSxy / SSxx;
const intercept = avgY - slope * avgX;
```

And the last thing to do is to return the function that has the specific formula for this input coded into it, so a user can simply call it.

Your function should now look like this:

```javascript
function linearRegression(inputArray, xLabel, yLabel) {
  // Split the input into one array of x values and one of y values.
  const x = inputArray.map((element) => element[xLabel]);
  const y = inputArray.map((element) => element[yLabel]);

  // Average of x.
  const sumX = x.reduce((prev, curr) => prev + curr, 0);
  const avgX = sumX / x.length;

  // Difference of each x to the average, and those differences squared.
  const xDifferencesToAverage = x.map((value) => avgX - value);
  const xDifferencesToAverageSquared = xDifferencesToAverage.map(
    (value) => value ** 2
  );

  // SSxx: sum of the squared x differences.
  const SSxx = xDifferencesToAverageSquared.reduce(
    (prev, curr) => prev + curr,
    0
  );

  // Average of y and the difference of each y to that average.
  const sumY = y.reduce((prev, curr) => prev + curr, 0);
  const avgY = sumY / y.length;
  const yDifferencesToAverage = y.map((value) => avgY - value);

  // SSxy: sum of the products of the x and y differences.
  const xAndYDifferencesMultiplied = xDifferencesToAverage.map(
    (curr, index) => curr * yDifferencesToAverage[index]
  );
  const SSxy = xAndYDifferencesMultiplied.reduce(
    (prev, curr) => prev + curr,
    0
  );

  const slope = SSxy / SSxx;
  const intercept = avgY - slope * avgX;

  // Return the fitted line as a function of a new input x.
  return (x) => intercept + slope * x;
}
```

Well, that's a working function. You could call it now, and it would work well.

```javascript
const linReg = linearRegression(inputArray, "squareMeters", "priceInDollars");

console.log(linReg(100)); // => 94666.38513513515
```

What's Next

That function still has a lot of potential for refactoring. There is a lot of repetition in it, and if you really were to use it on large datasets, some performance optimizations would most likely be needed. But it should be enough for you to understand how relatively straightforward it is to implement a linear regression in JavaScript. Because in the end, it is only some applied math.

You can, however, continue from this point on, if you wish to. Refactor that function, optimize its performance, increase the overall maintainability, and write some tests for it. That's great practice for your skills, for sure.
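
If you want a starting point, here is one possible direction, offered as a sketch rather than the definitive refactoring. The helpers `sum` and `mean`, the name `linearRegressionRefactored`, and the guard against unusable input are all assumptions added for illustration. The math is the same as in the function above.

```javascript
// Small helpers remove the repeated reduce() calls.
const sum = (values) => values.reduce((total, value) => total + value, 0);
const mean = (values) => sum(values) / values.length;

function linearRegressionRefactored(inputArray, xLabel, yLabel) {
  const x = inputArray.map((element) => element[xLabel]);
  const y = inputArray.map((element) => element[yLabel]);
  const avgX = mean(x);
  const avgY = mean(y);

  // Sum of squared x differences, and sum of the x/y difference products.
  const SSxx = sum(x.map((value) => (avgX - value) ** 2));
  const SSxy = sum(x.map((value, index) => (avgX - value) * (avgY - y[index])));

  // Assumption: refuse input for which no slope can be computed,
  // for example an empty array or x values that are all identical.
  if (!(SSxx > 0)) {
    throw new Error("Cannot fit a line: x values must not all be equal");
  }

  const slope = SSxy / SSxx;
  const intercept = avgY - slope * avgX;
  return (input) => intercept + slope * input;
}

// Same five house sales as before.
const sales = [
  { squareMeters: 200, priceInDollars: 190000 },
  { squareMeters: 100, priceInDollars: 90000 },
  { squareMeters: 115, priceInDollars: 120000 },
  { squareMeters: 150, priceInDollars: 140000 },
  { squareMeters: 140, priceInDollars: 125000 },
];

const predictPrice = linearRegressionRefactored(
  sales,
  "squareMeters",
  "priceInDollars"
);
console.log(predictPrice(100).toFixed(2)); // 94666.39
```

The guard is a design choice: without it, an input where every x is the same would divide zero by zero and silently return NaN for every prediction.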
